👋 Good morning! AI is no longer sitting at the edges of companies as an experimental layer; it’s moving into the operational core. Spotify says its best developers aren’t writing code anymore, Airbnb has shifted roughly a third of its North American customer support to AI, and a senior Anthropic safety researcher has resigned with a public warning about the broader trajectory of the field. These aren’t speculative pilots; they’re signals that AI is restructuring workflows, decision-making, and internal power dynamics inside major tech companies.

🏠 Airbnb Says AI Now Handles ~33% of Customer Support in North America

Airbnb revealed this week that its proprietary AI agent is now resolving roughly one-third of customer support issues across the U.S. and Canada, and the company plans to scale that capability globally over the next year.

What’s happening:

Airbnb has shifted a significant slice of routine support interactions, such as booking changes, refunds, and common disputes, to a custom-built AI system, which leadership claims reduces costs and improves service consistency. The rollout so far covers major languages in North America. The stated target is to handle over 30% of global tickets with AI, using both chat and voice tools, wherever human agents also operate.

Strategic positioning:

CEO Brian Chesky framed this not just as automation but as foundational to an AI-native experience. The company’s recent hire of CTO Ahmad Al-Dahle (formerly leading generative AI at Meta) signals that Airbnb wants AI to extend beyond support, with future features such as conversational search and deeper personalization baked into the app itself.

Airbnb argues its proprietary data, including ~200 M verified profiles and ~500 M reviews, gives its AI an edge over generic chatbots and standalone models, allowing it to integrate tightly with bookings, messaging, and operations at scale.

The trade-offs & risks:

Cost vs. experience: Shifting support tasks to AI can meaningfully cut operating expenses, but in travel the quality of resolution matters more than speed. Mishandled edge cases could erode trust if escalation paths aren’t smooth.

Human impact: Even if Airbnb keeps humans in the loop for complex issues, replacing a large share of support interactions has implications for the workforce and for long-term customer perception.

Execution risk: Delivering not just assistant-style responses but accurate, context-aware resolutions at scale remains non-trivial. Early deployment in a controlled region is one thing; global multilingual rollout is another.

Practical takeaway:

This isn’t hype. Airbnb is materially shifting its support operations to AI and positioning the platform to lean on automation as a competitive lever. But whether this becomes a net advantage or a source of friction will depend on converting that shift into better customer outcomes and maintaining trust, especially in hospitality, where nuance matters.

🎧 Spotify Says Its Best Developers Haven’t Written Code Since December - AI Is Reshaping Engineering

Has AI coding crossed a real inflection point? Spotify seems to think so. On its fourth-quarter earnings call, co-CEO Gustav Söderström said that the company’s best developers “have not written a single line of code since December,” describing a workflow where generative AI handles the bulk of implementation work.

At the center of this shift is an internal system called Honk, which uses Claude Code to accelerate coding and deployment. Engineers can prompt the system to fix bugs or add new features, even from Slack on a phone during their morning commute. Once the task is complete, Claude pushes a new version of the app back to the engineer for review and merging to production, potentially before they even arrive at the office. Spotify credits this setup with speeding up coding and deployment “tremendously.”

This isn’t happening in a vacuum. Spotify highlighted that it shipped more than 50 new features and changes to its streaming app throughout 2025, and that AI-powered Prompted Playlists, Page Match for audiobooks, and About This Song all launched in recent weeks. The company frames AI not as a peripheral tool, but as an embedded layer driving both internal velocity and outward-facing product innovation.

Söderström was explicit that this is “just the beginning,” suggesting Spotify sees AI development as compounding rather than plateauing. He also pointed to what he views as a defensible edge: a unique music-related dataset that other large language models cannot easily commoditize. Unlike factual domains (e.g., Wikipedia-style knowledge), music preference is subjective and culturally variable. What qualifies as “workout music” differs by geography and taste: hip-hop in the U.S., EDM in parts of Europe, heavy metal in Scandinavia. Spotify argues that behavioral and contextual music data at this scale doesn’t exist elsewhere, and that continually retraining its models on that dataset improves performance.

The company also addressed AI-generated music directly. Artists and labels can indicate in a track’s metadata how a song was created, while Spotify continues to police the platform for spam. That signals a balancing act: embracing AI tooling internally while managing potential downstream distortions in its content ecosystem.

Practical takeaway: The headline that top developers aren’t writing code is provocative, but the more important shift is structural. Engineers are moving from manual coding toward prompting, reviewing, and merging AI-generated output. That can materially increase velocity, as Spotify claims. But it also concentrates risk in specification and oversight. If quality control slips, AI can propagate errors at scale just as quickly as it ships features. The real test isn’t whether code is written by humans; it’s whether AI-driven workflows produce durable, maintainable software without eroding technical depth over time.

⚠️ Anthropic Safety Researcher Quits, Warns “The World Is in Peril”

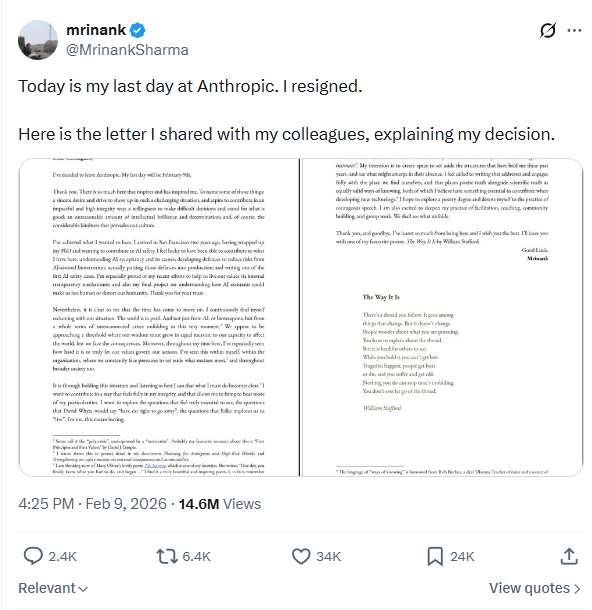

A lead safety researcher at Anthropic, Mrinank Sharma, has abruptly resigned and posted a strikingly philosophical letter warning of broad global threats, including AI and bioweapons, and questioning the alignment between stated values and actions within the company. The letter, described as cryptic and poetry-laden, was shared publicly on X and emphasized a belief that humanity is approaching a threshold where wisdom must grow as fast as our technological power.


Sharma had headed Anthropic’s Safeguards Research Team since its formation, a group focused on adversarial robustness and defenses against misuse of AI. In his note, he reflected on the difficulty of letting values truly govern actions and hinted at internal pressures that force compromises on core principles. He wrote that the world is “in peril” not just from AI but from a “whole series of interconnected crises,” though he did not specify concrete examples or cite internal incidents.

The resignation letter is notable less for specific allegations than for its tone: ambiguous warnings, philosophical musings, and a pivot toward a personal path. Sharma said he plans to pursue work aligned with integrity and “courageous speech,” even mentioning an interest in a poetry degree and citing a book tied to an unconventional philosophical school.

This departure comes amid broader turnover in the AI safety and research community, where exits occasionally draw attention both for what is said and for what isn’t. In Sharma’s case, without explicit claims about specific safety lapses or corporate malfeasance, the letter reads more like a personal reckoning than a detailed industry critique. But its public framing, a warning about existential peril from technology and other threats, adds fuel to ongoing debates about how AI companies manage safety, values, and competitive pressures.

Practical takeaway: This isn’t a whistleblower exposé with a list of specific failures; it’s an evocative, philosophical resignation that raises broader questions about how teams dedicated to safety think about their role within fast-moving AI firms. The substance matters: vague public warnings can attract attention, but without concrete details they do little to move regulatory or technical discussions forward. Whether this signals deeper tensions at Anthropic or simply one researcher’s personal pivot remains unclear.

🧩 Closing thought

The through line isn’t hype; it’s integration. AI is steadily taking over structured execution work while humans shift toward oversight and judgment, but that shift concentrates both leverage and risk. Productivity can increase dramatically, yet so can dependency, blind spots, and governance pressure. The next phase of this cycle won’t be defined by how impressive the demos are. It will be defined by whether companies can scale AI without compromising quality, resilience, or trust.